In our first instalment, we traced the evolution of data storage from traditional data warehouses to data lakes, and on to the emergence of the Lakehouse architecture. Now, let’s unpack the inner workings of that architecture and explore the layers and components that define a modern Data Lakehouse.

The architecture principles of a Data Lakehouse:

- Discipline at the Core, Flexibility at the Edge – Data storage layers must be structured, with strong governance and management, while the transformation and consumption layers should remain flexible. For example, a data scientist should be free to merge raw data with transformed datasets when building ML models.

- Decouple Compute and Storage – Unlike older architectures that tightly coupled processing and storage, Lakehouses separate the two. This lets either one scale independently, much as a modern laptop can be configured with processor and storage options chosen separately.

- Focus on Functionality Rather than Technology – A Lakehouse should support BI, AI/ML, ETL, and streaming use cases. Technology evolves rapidly, but business data use cases remain consistent, so designing for functionality ensures long-term adaptability.

- Modular Architecture – Components can be replaced or upgraded without impacting the entire platform. Services are instantiated based on specific functional requirements.

- Active Cataloguing – Without cataloguing, data lakes risk becoming data swamps. Metadata management ensures discoverability and governance.
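To make the cataloguing principle concrete, here is a minimal sketch of what "register metadata, then discover by it" can look like. It is only an illustration: the `DataCatalog` class, the `CatalogEntry` fields, and the dataset names are all hypothetical, and in practice this role is usually filled by a managed catalog service rather than an in-memory dictionary.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class CatalogEntry:
    """Metadata describing one dataset (all fields are illustrative)."""
    name: str
    zone: str
    owner: str
    columns: tuple
    tags: frozenset = field(default_factory=frozenset)


class DataCatalog:
    """Hypothetical in-memory catalog, standing in for a real catalog service."""

    def __init__(self):
        self._entries = {}

    def register(self, entry):
        # Register at write time so that no dataset lands uncatalogued.
        self._entries[entry.name] = entry

    def search(self, tag):
        # Discoverability: find dataset names by tag, in sorted order.
        return sorted(e.name for e in self._entries.values() if tag in e.tags)

    def owner_of(self, name):
        # Governance: every registered dataset has an accountable owner.
        return self._entries[name].owner


catalog = DataCatalog()
catalog.register(CatalogEntry("orders_raw", "raw", "ingest-team",
                              ("order_id", "amount"), frozenset({"sales"})))
catalog.register(CatalogEntry("orders_daily", "consume", "bi-team",
                              ("day", "revenue"), frozenset({"sales", "kpi"})))

print(catalog.search("sales"))
print(catalog.owner_of("orders_raw"))
```

The design point is that registration is part of the write path, so the catalog stays "active" instead of being filled in after the fact.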

Five major components of a Data Lakehouse:

- Ingesting and processing data

- Storing and serving data

- Deriving insights

- Applying data governance

- Applying data security

In this post, we focus on the first two components: ingesting and processing data, and storing and serving it.

Ingestion Layer: Blending Flexibility with Structure

Data ingestion is one of the most critical components of the architecture. Challenges commonly observed include:

- Time Efficiency – Manual mappings reduce engineering productivity.

- Schema Changes – Minor upstream changes can severely impact downstream systems.

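- Changing Schedules – When interdependent pipelines run on shifting schedules, downstream consumers such as predictive models can be disrupted.

- Streaming and Batch – Both ingestion modes often have to be managed simultaneously.

- Data Loss – Records can be lost during system synchronization.

- Duplicate Data – Re-running failed jobs without safeguards creates duplicates.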
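Two of these challenges, schema changes and duplicates from re-runs, lend themselves to a small illustration. The sketch below assumes records arrive as dictionaries with a unique `id` field and that the target store can be keyed by that field; the function names and the sample data are hypothetical, not part of any specific tool.

```python
def detect_schema_drift(expected_columns, record):
    """Return (missing, unexpected) column names for one incoming record."""
    expected = set(expected_columns)
    actual = set(record)
    return sorted(expected - actual), sorted(actual - expected)


def ingest_batch(target, batch, key="id"):
    """Upsert records by key so re-running the same batch adds no duplicates.

    Returns the number of records that were new to the target store.
    """
    written = 0
    for record in batch:
        if record[key] not in target:
            written += 1
        target[record[key]] = record
    return written


EXPECTED = ["id", "amount"]          # assumed contract with the upstream source
store = {}                           # stand-in for the real target table
batch = [{"id": 1, "amount": 10.0}, {"id": 2, "amount": 5.5}]

first_run = ingest_batch(store, batch)
second_run = ingest_batch(store, batch)   # simulated re-run after a failure

# An upstream rename from "amount" to "amt" is caught before it propagates.
drift = detect_schema_drift(EXPECTED, {"id": 3, "amt": 7.0})
print(first_run, second_run, drift)
```

Keying writes on a stable identifier is one common safeguard; checking each record against the expected columns is a lightweight way to surface upstream changes early.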

The Lambda Architecture pattern is well suited for addressing these complexities.

Lambda Architecture combines Batch, Streaming, and Consumption layers:

- Batch Layer: Raw data is stored and processed using distributed computing engines. Processed outputs are written to processed data stores and made available for serving.

- Streaming Layer: Real-time data is ingested as event topics, processed in micro-batches, stored, and served downstream.

- Publish and Consumption Layer: Serves processed data to downstream consumers.
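The interplay of the three layers can be sketched in a few lines. This is a toy model under stated assumptions: events are dictionaries with hypothetical `key` and `value` fields, the metric is a simple running total, and the recent events have not yet been absorbed by the batch layer. A real deployment would use a distributed engine and a message broker instead of plain Python lists.

```python
from collections import defaultdict


def batch_view(raw_events):
    """Batch layer: recompute totals from the full raw history."""
    totals = defaultdict(int)
    for event in raw_events:
        totals[event["key"]] += event["value"]
    return dict(totals)


def process_micro_batch(speed_view, events):
    """Streaming layer: fold one micro-batch into the incremental view."""
    for event in events:
        speed_view[event["key"]] = speed_view.get(event["key"], 0) + event["value"]
    return speed_view


def serve(batch, speed):
    """Consumption layer: merge both views for downstream consumers."""
    merged = dict(batch)
    for key, value in speed.items():
        merged[key] = merged.get(key, 0) + value
    return merged


history = [{"key": "clicks", "value": 3}, {"key": "views", "value": 10}]
recent = [{"key": "clicks", "value": 2}, {"key": "signups", "value": 1}]

served = serve(batch_view(history), process_micro_batch({}, recent))
print(served)
```

Consumers only ever call `serve`, so they see one merged answer without needing to know which layer produced each part of it.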

Storing and Serving the Data

Data storage must balance performance, duplication reduction, and enterprise security standards.

The four storage zones:

- Raw Zone (Bronze): Stores data in original formats such as CSV, Avro, ORC, XML. Maintains structural integrity of source data.

- Enrich Zone (Silver): Applies transformations, standardization, consolidation, and cleansing. Serves as a recovery point and an intermediate processing layer.

- Consume Zone (Gold): Houses fully processed, analytics-ready datasets for BI and ML use cases.

- Archive Zone: Long-term, cost-optimized storage for inactive data.
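As a rough illustration of how data moves through the first three zones, the sketch below takes a small raw CSV payload, cleanses and standardizes it, and then aggregates it. The column names, the sample rows, and the `to_silver` and `to_gold` helpers are assumptions made for the example; real pipelines would typically run this logic in a distributed engine against object storage.

```python
import csv
import io

# Hypothetical raw payload: messy whitespace, a duplicate row, and a bad amount.
RAW_CSV = "order_id,country,amount\n1, us ,10.50\n2,DE,4.00\n2,DE,4.00\n3,us,bad\n"


def to_silver(raw_text):
    """Enrich zone: parse, standardize, cleanse, and de-duplicate raw rows."""
    seen, rows = set(), []
    for row in csv.DictReader(io.StringIO(raw_text)):
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # cleansing: drop rows whose amount cannot be parsed
        order_id = int(row["order_id"])
        if order_id in seen:
            continue  # consolidation: keep the first copy of each order
        seen.add(order_id)
        rows.append({"order_id": order_id,
                     "country": row["country"].strip().upper(),
                     "amount": amount})
    return rows


def to_gold(silver_rows):
    """Consume zone: aggregate into an analytics-ready revenue-per-country view."""
    revenue = {}
    for row in silver_rows:
        revenue[row["country"]] = revenue.get(row["country"], 0.0) + row["amount"]
    return revenue


bronze = RAW_CSV            # Raw zone: kept exactly as received
silver = to_silver(bronze)
gold = to_gold(silver)
print(silver)
print(gold)
```

Because the raw payload is never modified, the silver and gold outputs can always be rebuilt from it if a transformation rule changes.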

Common structured and semi-structured formats include CSV, Parquet, and JSON. Unstructured formats include MP4, JPEG, TIFF, GIF, WAV, and AVI.

For serving:

- SQL-based serving for BI and AI use cases

- API-based serving using NoSQL for real-time services

- Data sharing via APIs or data clean rooms
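For the SQL-based option, a tiny sketch shows the idea of exposing a Consume Zone dataset to BI-style queries. It uses an in-memory SQLite database purely as a stand-in for a Lakehouse SQL engine; the `gold_revenue` table, its columns, and the sample rows are hypothetical.

```python
import sqlite3

# In-memory SQLite stands in for the SQL engine that serves gold datasets.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE gold_revenue (country TEXT, day TEXT, revenue REAL)")
conn.executemany(
    "INSERT INTO gold_revenue VALUES (?, ?, ?)",
    [("US", "2024-01-01", 10.5),
     ("US", "2024-01-02", 4.5),
     ("DE", "2024-01-01", 4.0)],
)


def revenue_by_country(connection):
    """A typical BI query: total revenue per country, sorted by country."""
    cursor = connection.execute(
        "SELECT country, SUM(revenue) FROM gold_revenue "
        "GROUP BY country ORDER BY country"
    )
    return cursor.fetchall()


result = revenue_by_country(conn)
print(result)
```

The same gold table could equally sit behind an API for real-time services; the SQL path is simply the most direct fit for dashboards and ad hoc analysis.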

Conclusion: The Technical Symphony of Lakehouses

Lakehouse architecture combines modular design, scalable compute-storage separation, and multi-layered storage to support modern analytics. Understanding these components enables enterprises to build resilient, future-ready data platforms.

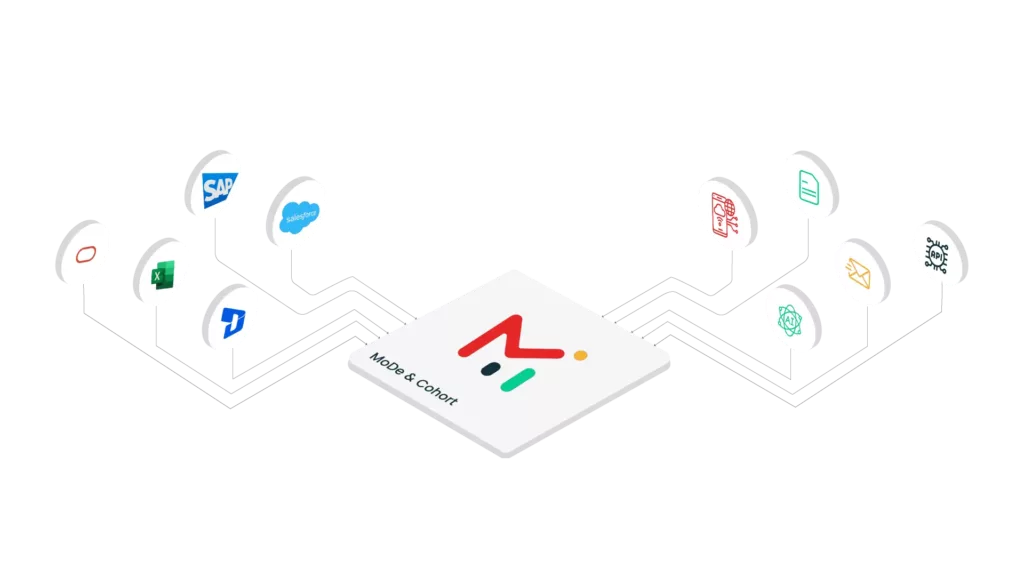

Midoffice Data simplifies Lakehouse complexity by delivering end-to-end capabilities across ingestion, storage, transformation, and serving layers tailored for enterprise ecosystems.

References

- Data Lakehouse in Action by Pradeep Menon

- Monte Carlo – Data Ingestion

- Databricks – Data Lakehouse